Estimating the infrastructure requirements for a large user in France

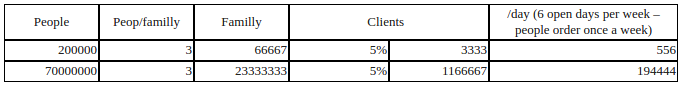

Calculating the anticipated load

For a pilot city of 200,000 people, we estimate 556 clients per day.

For two pilot cities of that size, 1,112 clients per day.

For the whole of France, 194,444 clients per day.

A model food hub in Melbourne serves 11,647 page views to 1,543 users in one day (7.5 views per user).

In the peak hour of this day, they serve 1,611 page views to 201 users (8 views per user).

Within a day, they serve 1,611 / 11,647 = 14% of page views within the peak hour.
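The ratios above can be reproduced with a few lines of Python. This is a minimal sketch: the variable names are illustrative, and only the four traffic figures come from the Melbourne hub.

```python
# Traffic figures for the model food hub in Melbourne (from the text above).
page_views_day = 11_647
users_day = 1_543
page_views_peak_hour = 1_611
users_peak_hour = 201

# Average page views per user over the whole day and within the peak hour.
views_per_user_day = page_views_day / users_day
views_per_user_peak = page_views_peak_hour / users_peak_hour

# Share of the day's page views that falls within the peak hour.
peak_share = page_views_peak_hour / page_views_day

print(f"{views_per_user_day:.1f} views per user per day")
print(f"{views_per_user_peak:.1f} views per user in the peak hour")
print(f"{peak_share:.0%} of page views in the peak hour")
```

The later projections use the peak-hour figure of 8 views per user for the whole day, which is slightly pessimistic compared with the daily average of 7.5.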

Two pilot cities (1,112 users per day)

We expect to serve 1,112 * 8 = 8,896 page views per day.

14% in peak hour = 1,245 page views, or 21 requests per minute (RPM)

Whole of France (194,444 users per day)

We expect to serve 194,444 * 8 = 1,555,552 page views per day.

14% in peak hour = 217,777 page views, or 3,630 RPM
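The same projection can be written as a small function so that other city sizes are easy to try. This is a sketch under the assumptions used above: 8 page views per client, 14% of daily page views in the peak hour, and one request per page view. The function and parameter names are illustrative.

```python
def peak_requests_per_minute(clients_per_day, views_per_client=8, peak_share=0.14):
    """Estimate peak-hour requests per minute from daily client numbers."""
    page_views_per_day = clients_per_day * views_per_client
    page_views_peak_hour = page_views_per_day * peak_share
    # Spread the peak hour evenly over its 60 minutes.
    return page_views_peak_hour / 60


pilot_rpm = peak_requests_per_minute(1_112)      # two pilot cities
france_rpm = peak_requests_per_minute(194_444)   # whole of France

print(round(pilot_rpm), round(france_rpm))
```

Spreading the peak hour evenly over 60 minutes ignores shorter bursts within that hour, so the real peak could be somewhat higher.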

Calculating the OFN server capability

By average response time

OFN Australia shows an average response time of approximately 700 ms. A crucial part of the work would be lowering this, both to provide a more responsive site to customers and to improve scalability. However, let’s use the current figure to generate a pessimistic estimate.

We have three web server workers, so the server can serve three concurrent requests.

(60 s / 0.7 s per request) x 3 workers = 257 RPM
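As a sketch, the response-time estimate is a one-line formula. It assumes each worker handles one request at a time and is never idle, which is why it gives an upper bound. The function name and the halved response time in the second call are illustrative, not measured values.

```python
def throughput_rpm(avg_response_seconds, workers):
    """Upper-bound requests per minute for workers that each serve one request at a time."""
    requests_per_worker_per_minute = 60 / avg_response_seconds
    return requests_per_worker_per_minute * workers


current = throughput_rpm(0.7, 3)      # current average response time
optimised = throughput_rpm(0.35, 3)   # hypothetical: response time halved

print(round(current), round(optimised))
```

Because throughput is inversely proportional to response time in this model, halving the response time doubles the capacity of the same server.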

By load test

Load testing the Australian production server remotely shows a real-world response rate of 186 RPM.

To take our estimates forward, I’ll use the slower of these estimation methods (186 RPM).

However, I’d expect that with performance optimisation work, we could greatly reduce the average response time.

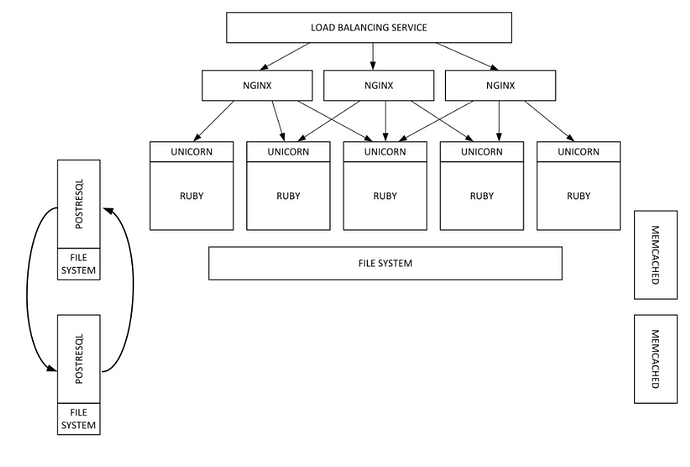

Calculating the server requirements

All figures are calculated with servers running at 75% load at peak, to provide some headroom. At that level, each web server handles 186 x 0.75 = 139.5 RPM.

Costing

Prices taken from Amazon Web Services, excluding data charges:

Load balancer: US$0.0294 / hour, $21.46 / month

c4.xlarge server (web, db): US$0.237 / hour, $173.01 / month
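The monthly figures are consistent with billing for 730 hours per month (8,760 hours per year divided by 12). That hour count is an inference from the two prices rather than something stated in the source, so treat this as a sketch.

```python
HOURS_PER_MONTH = 730  # assumption: 8,760 hours per year / 12 months


def monthly_price(hourly_usd):
    """Convert an hourly on-demand price to an approximate monthly price."""
    return round(hourly_usd * HOURS_PER_MONTH, 2)


load_balancer_month = monthly_price(0.0294)
server_month = monthly_price(0.237)

print(load_balancer_month, server_month)
```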

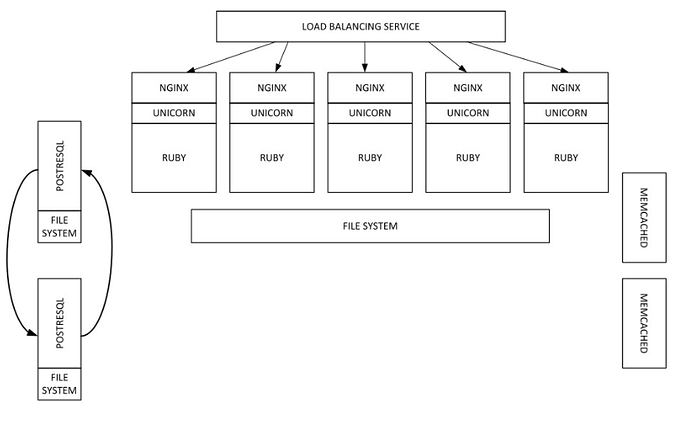

Two pilot cities

Our peak of 21 RPM is well within the server capability of 186 RPM (11% of total capacity).

In theory we could run this pilot off a single server.

However, I recommend running two web servers and two database servers. That provides us with redundancy in case one server fails and prepares our infrastructure to scale in the future.

Load balancer: $21.46 / month

4 servers (2 x web, 2 x DB): 4 x $173.01 = $692.04 / month

Total: $713.50 / month

Whole of France

Our peak of 3,630 RPM could be served by 26 web servers, each running at about 75% of its 186 RPM capacity (3,630 / (186 x 0.75) = 26.02).
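The server count follows from dividing the peak load by the usable capacity of one server. The sketch below returns both the exact ratio and the count rounded up. The function name is illustrative; the 186 RPM and 75% figures are the ones used in this document.

```python
import math

SERVER_CAPACITY_RPM = 186   # load-tested throughput of one server
TARGET_UTILISATION = 0.75   # run at 75% of capacity at peak


def servers_needed(peak_rpm):
    """Return (exact ratio, count rounded up) of web servers for a peak load."""
    usable_rpm = SERVER_CAPACITY_RPM * TARGET_UTILISATION  # 139.5 RPM per server
    exact = peak_rpm / usable_rpm
    return exact, math.ceil(exact)


pilot_exact, pilot_servers = servers_needed(21)
france_exact, france_servers = servers_needed(3_630)

print(pilot_servers, round(france_exact, 2), france_servers)
```

For the whole of France the exact ratio is about 26.02, so 26 servers would run marginally above 75% at peak, and rounding up strictly gives 27. At this level of precision the difference is well inside the uncertainty of the load estimate.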

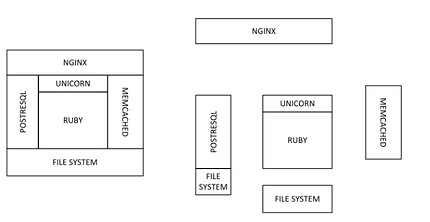

As a starting point, I propose the following architecture:

1 load balancer

26 web servers

2 database servers (the second as a hot standby)

1 delayed job worker

Load balancer: $21.46 / month

29 servers: 29 x $173.01 = $5,017.29 / month

Total: $5,038.75 / month
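The monthly totals can be tallied from the unit prices listed under Costing. This sketch assumes every server, including the database servers and the delayed job worker, is a c4.xlarge at $173.01 / month. It works in whole cents to avoid floating-point rounding, and the names are illustrative.

```python
LOAD_BALANCER_CENTS = 2_146   # $21.46 / month
SERVER_CENTS = 17_301         # $173.01 / month per c4.xlarge


def monthly_cost(servers, load_balancers=1):
    """Monthly cost in dollars for a number of servers and load balancers."""
    cents = servers * SERVER_CENTS + load_balancers * LOAD_BALANCER_CENTS
    return cents / 100


pilot_total = monthly_cost(4)             # 2 web + 2 DB
france_total = monthly_cost(26 + 2 + 1)   # 26 web + 2 DB + 1 delayed job worker

print(f"${pilot_total:,.2f}", f"${france_total:,.2f}")
```

Data charges are excluded here, as they are in the prices above.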

Notes

As noted above, optimising the application could yield much greater performance from each server (perhaps 2x).

In the above estimate, I haven’t addressed database scaling. It’s likely that we’ll need far fewer web servers and far more resources dedicated to the database. However, this estimate serves as a starting point to examine the infrastructure cost of the full rollout of the project.

The costs are based on AWS pricing in US dollars. Prices differ between geographic regions, and the hosting provider of your choice may charge more. Since this is a project supporting local infrastructure and sustainability, a French hosting company using renewable energy might be worth considering.