Here’s my response to @Rachel, to keep the conversation going:

Thanks for the thoughtful feedback, @Rachel. I’m going to try responding to all your points; hopefully this is useful and not too tedious - bear with me! Regarding this point:

“From the early document, I’ve understood that the AI pilot would be for the current @devs team.”

The part of the ‘AI pilot plan’ that I’m trying to get started with this working group (non dev AI-assisted code contributions) is outlined in the ‘AI Principles & Pilot Testing’ document, in the section titled ‘Possible Future State’:

The document has been reviewed and approved for general discussion at the Stewardship Circle, and Nicolas synthesized Coop Circuit’s feedback a few weeks ago in the original Slack thread, via this file: NoteGenevieveIA.md - Nuage CoopCircuits. No concerns were flagged at that point, but I appreciate you may or may not have been party to every aspect of that in-house discussion.

Per Nick’s comment on Slack, the UK instance has reviewed it too and explicitly given it a thumbs up. I also asked Maikel to review my post before I published it, and he approved it. So, just to say I have made every effort to gut-check this with people before making the offer public, but I appreciate the opportunity to respond to your thoughts and concerns also.

Regarding this point:

“But here I understand we want to experiment already with people who are not developers. I’m a bit concerned with that move.”

That’s understandable! I think this is the point I’m trying to make about developing “communities of practice”: we shouldn’t just be ‘going it alone’ on this, but actively refining and co-developing the best practices and standard operating procedures that help ensure the success of this work. That work is already underway. If you review Greg’s series of pull requests over the last couple of months, you’ll see that there is steady improvement in the quality of the AI-generated code, as shown by dev responses on GitHub:

From a period of frustration:

To increasing satisfaction (obviously we shouldn’t expect this to be a totally linear process, etc.):

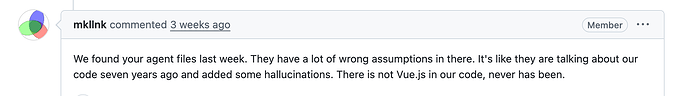

I think the most in-depth discussion of how we can evolve a really robust and useful community practice around the training of AI coding agents is currently taking place on GitHub, around the need for well-developed Agent.MD files - a convo to which Maikel has been actively contributing (thank you Maikel!):

In my view (and @maikel please push back on this, as you like) the above process is evidence that Greg (not a developer, but a seasoned product owner with technical skill) is actively helping OFN develop some best practices in the use of AI agents, and that this work is already bearing some initial fruit. It’s early days, but I think we can see a process of slow but steady improvement taking place (and everyone can assess this for themselves by following Greg’s commits on GitHub - gbathree). And I think this suggests we should continue a methodical, time-bounded exploration of this space.

So, to this point:

“From what I’ve seen elsewhere (including in Claude own’s code that got leaked) is that it is very important for the contributor to understand what the AI has done before submitting a PR. We need to review everything the AI has produced.”

I hope it’s clear that we are not advocating that everyone just prompt Claude, cross their fingers, hope for the best, and pull the trigger. We are trying to design this process in an intentional way, to be methodical and iterative, conducted under the direction and guidance of OFN devs, in a way that lets collective learning accumulate. So on this point, we’re in full agreement:

“I don’t think a power user with no dev knowledge is able to do that. That means that we will rely on the OFN core team to do that review.”

On this point:

“Is there budget to cover review and testing?”

The short answer is yes. The above plan has been okayed by @Serenity_Hill and @genevieve, as falling under the ‘Macdoch budget’ and hence part of ‘core.’ There is a strategic element to this. In discussion with foundations such as Macdoch and 11th Hour, Serenity and I are already fielding questions about OFN’s AI strategy - i.e. we need to have one, and would probably struggle to secure further significant support from these foundations if we can’t ultimately articulate smart, value-aligned ways to negotiate the AI landscape, one way or another.

So this small, time-bounded experiment (I’d emphasise the word ‘experiment’) is our effort to explore one possible pathway, one that I think aligns with core OFN values, for the reasons described in my original post.

Rather than eating up scarce resources on a plan we haven’t thought through or gut-checked with the community at large, we see this as part of a methodical, stage-gated process that actually assists development of further funding resources, while also hopefully empowering our community in the ways described above. (I’ll let Serenity weigh in here re the funding / budget implications of this two-month experiment, if she has thoughts to add.)

I’ll add, too, that there are already signs of this generating tangible value for Coop Circuits users / coop members. At the Stewardship Circle meeting this week, @Ingrid mentioned an S3 bug that’s been creating headaches for users in France. At the Stewardship Circle we began what seemed likely to become a multi-week discussion around defining “what does and doesn’t fall into ‘core’”, “does an S3 count?”, “will we need to move budget around”, and “what meetings do we have to set up next”.

Meanwhile, Emily and I flagged the issue during our co-working group with Greg and he picked it up. A pull request was made the same day, which has since been approved and is ready to merge:

https://github.com/openfoodfoundation/openfoodnetwork/pull/14175

Isn’t this the kind of thing we’d want to see more of? A leaner, more efficient OFN, where our instance managers and power users have the tools at their disposal to directly, efficiently and securely address the issues that are causing them pain, without having to wait on slow-going and opaque budgetary and governance discussions to play out over multiple timezones and fractional roles?

I hope this isn’t coming across as defensive (always a risk with long posts). I’m just trying to respond point by point, and address your legitimate concerns with evidence that shows we are trying to think this through carefully and make every effort to engage the community in a thoughtful way, and that we do seem to be making some headway on replicable processes.

To your last point on Claude - I think this is a really meaty one, worthy of a lot of further discussion:

A note on our use of Claude: We see this as a temporary measure, and are keen to transition to use of open source and open weight AI models

Are we sure it will be easy to transition? Are there already documented experiences on that?

I feel instances in Europe must really be conscious of that choice, as they will have to defend it in their presentation in a context where Europe is all about sovereignty and moving away from big tech.

This is introducing a dependency towards Claude we are not able to manage the risk (how will their price evolve, their data center management…).

I’m aware Claude is already used in many library OFN is dependent on and that contributions made with Claude have already been accepted.

But here we are talking of actively promoting Claude’s model and making it the default tool for that pilot. That’s a big move, isn’t it

I think the short answer is that many of the answers to this question lie in the effective use of Agent.MD files, which are vendor-agnostic and usable by any coding agent on the market (they are just simple markdown files, at the end of the day). So it’s possible to run both Claude Code and Open Code off the same Agent.MD, and have them both making contributions and updates as new learnings and processes are refined.

I guess that’s one direction we could go with this initial experiment - folks in Europe could try working with Open Code, while the rest of us continue with Claude Code for the time being. I think running two different tools in parallel would create more friction and slow progress in building the shared human skills and processes we need to build together - but it’s a direction worth considering.

Another option is a phased approach: run the initial working group with Claude Code, then Greg and I take some time to explore Open Code more seriously, and we pick this up again in a few months with any interested folks in the EU. I think the current consensus is that Claude Code is by far the more performant tool right now. However, as you note, there have been significant leaks at Anthropic... and this is a good thing for open source! All the open source AI labs now have access to parts of Anthropic’s secret sauce, so I think we should expect meaningful gains in the performance of open source coding agents over the next six months, based on what they learned from the Claude Code leaks.

Last point (sorry for the enormous post!): in Canada we are also grappling with a version of the same forces at work in Europe around tech sovereignty and reducing dependency on big tech. I was recently in touch with a Canadian AI firm called Evermore (https://evormore.ai/), who have built a niche explicitly focused on building Canadian tech sovereignty through the use of open source AI tooling. It’s a really interesting model, and I’m sure there are comparable providers in the EU focused on on-premise deployment of open source AI tools.

This is definitely a vector we should all be exploring, IMO. But my instinct is that the OFN community needs first to build a better collective understanding of the new AI tool ecosystems, and emerging best practice, before we can make informed assessments of the feasibility of more ambitious sovereign deployments of AI. This short two-month experiment is, in my view, our most expedient route to getting more of us using and understanding AI in concrete, practical ways. Personally, I’d rather we start here, build the foundational skills (human and agent), and then make the jump toward the more exciting, liberating and value-aligned possibilities that I think Evermore is indicative of.

In any case, let’s keep the conversation going! @Rachel, if you’d be open to joining the exploratory call with Greg and me next week, that would be great. Totally your call, but we’d be glad to have you there, if you can make it! Cheers

We’ll follow up to schedule a short group call where you can ask questions and determine if you think the working group is a good fit for you. After that, if you express interest, we’ll send a brief form to collect some details on your objectives for the working group, and some background on what makes you a good fit for the work - then we’ll onboard 7 people, with others waitlisted for future opportunities.
